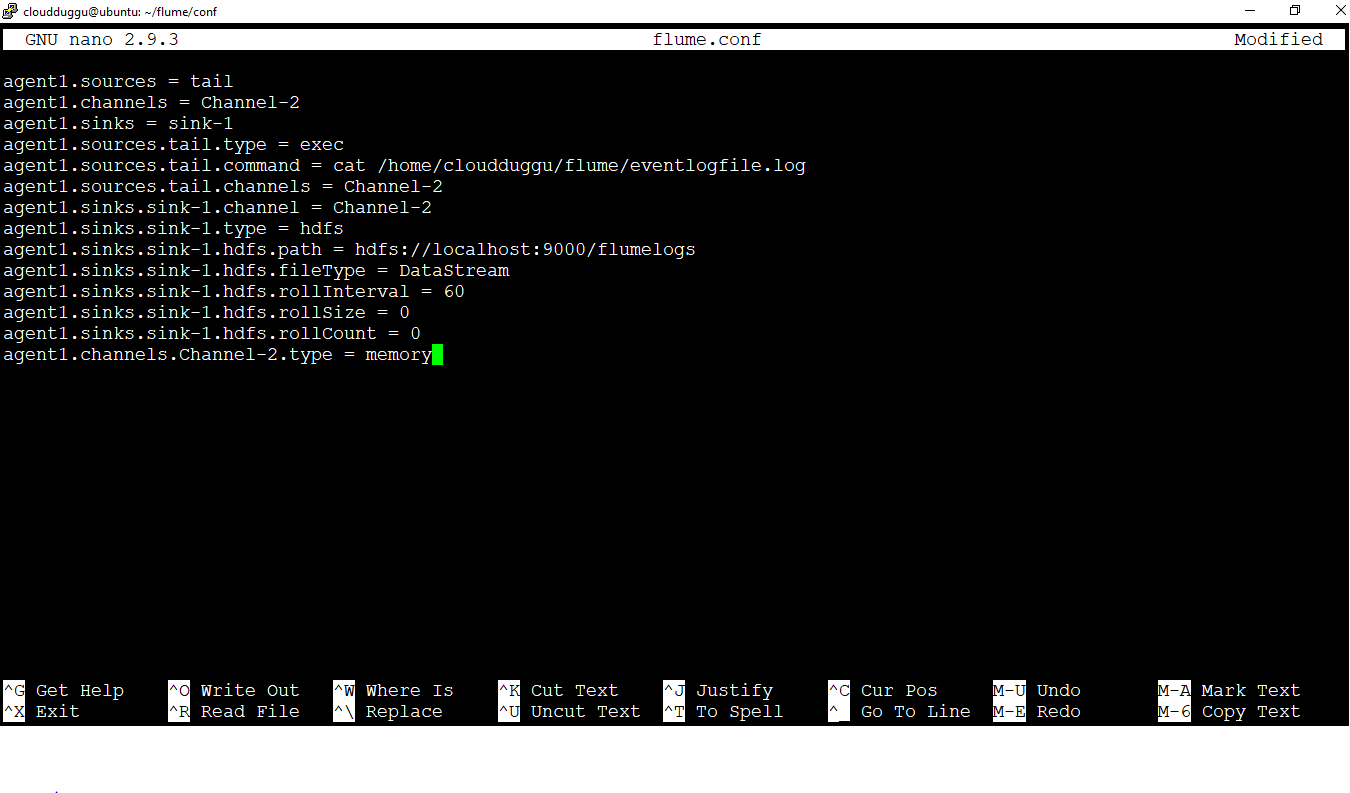

Here is the diagram for both the Producer and the Consumer.

Flume is integrated with Kafka to stream high volumes of log data from a source to a destination and store it in HDFS. Integrating the two makes it possible to stream the data in a Kafka topic at high speed to different sinks. In this first setup, Flume acts as the Consumer and stores the data in HDFS.

Start the Kafka server:

    bin/kafka-server-start.sh config/server.properties

Here is the command for creating the topic in Kafka:

    bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic kafkatest

Execute the console producer command to publish messages to the Kafka topic:

    bin/kafka-console-producer.sh --broker-list localhost:9092 --topic kafkatest

Download and install Apache Flume on your local machine and start it.

Use the Kafka source to stream data from Kafka topics to Hadoop. The Kafka source can be combined with any Flume sink, making it easy to write Kafka data to HDFS, HBase, etc. The following is the Flume configuration:

    a1.sources = r1
    a1.sources.r1.type = .kafka.KafkaSource
    a1.sources.r1.zookeeperConnect = localhost:2181
    a1.sources.r1.spoolDir = /tmp/kafka-logs/

Start Flume to copy the data into the HDFS sink:

    bin/flume-ng agent --conf conf --conf-file conf/flume-conf.properties =DEBUG,console --name a1 -Xmx512m -Xms256m

Flume can also act as the Producer: use a Flume source to write to a Kafka topic. Here is the configuration file for Flume with Kafka in order to act as the Producer:

    a1.sources = r1
    a1.sources.r1.command = cat /home/indium/dek.csv
    a1. = 100
    a1.sources.r1. = replicating
    a1. = .kafka.KafkaSink
    a1. = localhost:9092

We have already seen the configuration for Flume, and we have also seen above how to write to the HDFS sink.

What are the best practices for Flafka?
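
A Flume agent only moves data when every source is bound to at least one declared channel and every sink is bound to exactly one. The sketch below is a small pre-flight check in Python that reads a properties-style agent configuration and reports missing or undeclared channel bindings. The helper names `parse_properties` and `check_wiring`, and the sample wiring for agent `a1`, are hypothetical and not part of the setup described here. The only Flume conventions it relies on are the `<agent>.sources.<name>.channels` and `<agent>.sinks.<name>.channel` keys.

```python
def parse_properties(text):
    """Parse 'key = value' lines, skipping blank lines and '#' comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        props[key.strip()] = value.strip()
    return props


def check_wiring(props, agent):
    """Return a list of human-readable channel wiring problems for one agent."""
    problems = []
    channels = set(props.get(f"{agent}.channels", "").split())

    # Sources may fan out to several channels (space-separated list).
    for source in props.get(f"{agent}.sources", "").split():
        bound = props.get(f"{agent}.sources.{source}.channels", "").split()
        if not bound:
            problems.append(f"source {source} has no channels")
        problems += [f"source {source} uses undeclared channel {c}"
                     for c in bound if c not in channels]

    # Each sink drains a single channel.
    for sink in props.get(f"{agent}.sinks", "").split():
        bound = props.get(f"{agent}.sinks.{sink}.channel", "")
        if not bound:
            problems.append(f"sink {sink} has no channel")
        elif bound not in channels:
            problems.append(f"sink {sink} uses undeclared channel {bound}")
    return problems


# Hypothetical wiring only; type-specific settings are omitted on purpose.
SAMPLE = """
a1.sources = r1
a1.channels = c1
a1.sinks = k1
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
"""

if __name__ == "__main__":
    for problem in check_wiring(parse_properties(SAMPLE), "a1"):
        print(problem)
```

Running the same check against the contents of `conf/flume-conf.properties` before launching `flume-ng` is one way to catch a source or sink that was never attached to a channel.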
